
OpenAI has introduced two new streamlined versions of its flagship model family, GPT-5.4 Mini and GPT-5.4 Nano, as it moves to meet growing demand for faster and more cost-efficient artificial intelligence systems.

The models, announced on 18 March 2026, are designed for high-volume, real-time use cases, offering lower latency and reduced costs while maintaining much of the performance associated with larger models in the GPT-5.4 series.

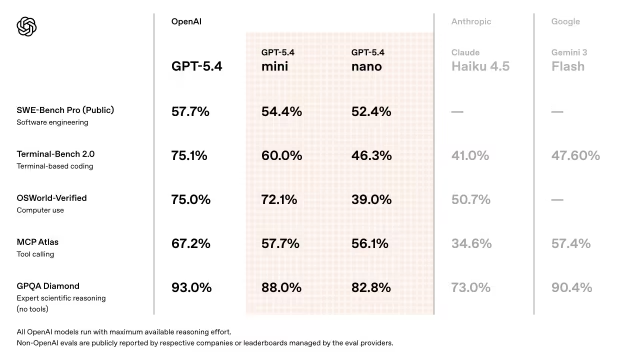

According to the company, GPT-5.4 Mini delivers a significant improvement over earlier lightweight versions and approaches the performance of the full GPT-5.4 model across a range of tasks. GPT-5.4 Nano, by contrast, is optimised for more targeted functions such as classification, data extraction and ranking, where speed and efficiency are prioritised over complexity.

Both models support a one-million-token context window in beta, enabling them to process lengthy documents and extended conversations in a single request, a capability increasingly sought after in enterprise and developer applications.

Focus on Efficiency and Scale

OpenAI said the new releases are aimed at addressing the rising cost of deploying AI systems at scale, particularly as businesses integrate such tools into customer service, analytics and automation workflows.

GPT-5.4 Mini is available from $0.075 per million input tokens and $0.30 per million output tokens, while GPT-5.4 Nano is offered at even lower rates for simpler tasks.
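To put the quoted rates in context, the short Python sketch below estimates spend for a given token volume. It uses only the GPT-5.4 Mini starting prices cited above; the function name and the workload figures (100,000 requests a day, 800 input and 200 output tokens each) are hypothetical assumptions chosen for illustration, not figures from OpenAI. GPT-5.4 Nano is left out because its rates are not stated here.

```python
# GPT-5.4 Mini starting prices as quoted in this article (USD per million tokens).
MINI_INPUT_PRICE_PER_MILLION = 0.075
MINI_OUTPUT_PRICE_PER_MILLION = 0.30


def estimate_cost_usd(input_tokens: int, output_tokens: int) -> float:
    """Return the estimated cost in USD for the given token counts."""
    input_cost = input_tokens / 1_000_000 * MINI_INPUT_PRICE_PER_MILLION
    output_cost = output_tokens / 1_000_000 * MINI_OUTPUT_PRICE_PER_MILLION
    return input_cost + output_cost


# Hypothetical workload (an assumption for illustration only):
# 100,000 requests per day, each with 800 input and 200 output tokens.
requests_per_day = 100_000
daily = estimate_cost_usd(requests_per_day * 800, requests_per_day * 200)
print(f"Estimated daily cost: ${daily:.2f}")
```

Because output tokens are priced at four times the input rate, workloads that generate long responses will cost noticeably more than those that mostly read text, such as classification or extraction.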

The models retain advanced reasoning capabilities but operate with reduced computational requirements, allowing for faster deployment and lower infrastructure costs. That is a key consideration as demand for inference, the process of running a trained model to generate responses, continues to grow.

Capabilities and Use Cases

Both variants support multimodal inputs, including text, images and audio, and integrate with OpenAI’s broader ecosystem, including application programming interfaces and enterprise tools.

Early benchmark results suggest GPT-5.4 Mini performs close to the full model on widely used tests such as MMLU, which measures general knowledge, and HumanEval, a coding benchmark. GPT-5.4 Nano, meanwhile, is geared towards tasks requiring minimal latency, such as filtering content, extracting structured information and ranking search results.

Developers are expected to deploy the models in a range of applications, from chatbots and content moderation systems to summarisation tools and more complex “agentic” workflows, where AI systems carry out multi-step tasks.

OpenAI said the models have undergone extensive safety testing, including red-teaming exercises and bias evaluations, as part of its ongoing efforts to reduce harmful outputs.

Intensifying Competition

The launch comes amid escalating competition in the AI sector. Rivals, including Google and Anthropic, have released increasingly advanced models, while Chinese developers such as DeepSeek continue to expand open-source alternatives.

OpenAI’s strategy appears focused on capturing the growing market for high-frequency, cost-sensitive applications, where efficiency and performance-per-dollar are becoming more important than absolute model size.

Investor sentiment was broadly positive following the announcement, with secondary market valuations for OpenAI showing modest gains. Across the wider technology sector, however, volatility persists as companies weigh the high costs of AI development against uncertain returns.

A Shift Towards Practical Deployment

The release of smaller, more efficient models reflects a broader shift in the AI industry. As adoption accelerates, the emphasis is moving from building ever-larger systems to deploying models that can operate reliably and affordably at scale.

As 2026 unfolds, analysts expect continued rapid iteration across the sector, with efficiency, accessibility and real-world usability emerging as the defining factors in the next phase of artificial intelligence development.
